The Climate Damage Function Problem No One Wants to Admit

One of the most striking features of modern climate economics is not consensus, it’s dispersion. Depending on which paper, model, or administration you consult, the economic damages from climate change range from modest to catastrophic. The “social cost of carbon” alone has swung wildly, from roughly $190 per metric ton of emissions under the Biden administration to effectively zero under Trump.

A new paper by Finbar Curtin and Matthew Burgess, “The Empirically Inscrutable Climate-Economy Relationship,” argues that this dispersion is not a temporary problem awaiting better data or cleverer econometrics. It is, instead, a fundamental and irreducible feature of the enterprise. Their conclusion is uncomfortable: we cannot reliably estimate the macroeconomic damage from climate change using historical data.

The Identification Problem at the Core

Most empirical climate-economy models follow a similar structure. Researchers estimate how temperature (or other climate variables) has historically affected GDP, then feed projected future warming into that relationship to generate estimates of future economic damages.

The problem, Curtin and Burgess argue, is that this relationship is not stable or uniform, but varies dramatically across both space and time.

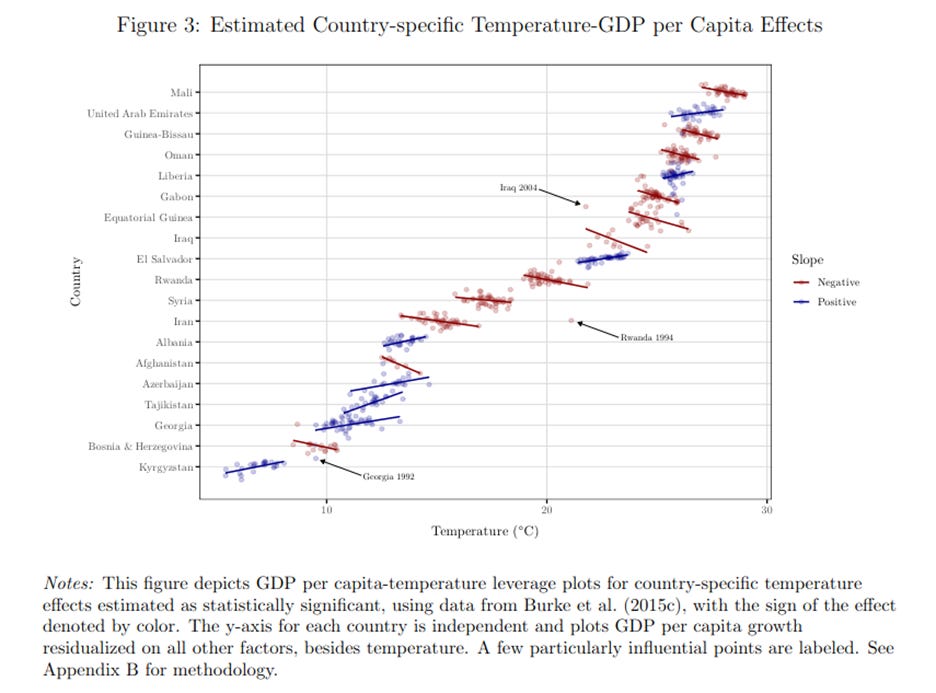

Countries with the same average temperature can have entirely different economic responses to climate variation. El Salvador and Iraq, for example, may share similar climates but have radically different economic structures, institutions, and adaptive capacities. Pooling them into a single regression implicitly assumes a common “damage function” that simply doesn’t exist (see Figure 3 below).

Time adds another layer of complexity. As countries grow richer, they adapt. Air conditioning, infrastructure, migration, and sectoral shifts all change how temperature affects economic output. That means the relationship between climate and GDP is itself evolving, precisely the kind of instability that undermines standard econometric identification.

Economists typically deal with this kind of heterogeneity by imposing simplifying assumptions. But here’s the catch: if you assume away too much variation, your model becomes misidentified. If you try to fully account for it, you run out of degrees of freedom and can’t estimate anything meaningful.

There’s no econometric trick that resolves this tradeoff. That’s why the authors describe the relationship as “empirically inscrutable.”
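To make the tradeoff concrete, here is a minimal Python sketch on synthetic data. Everything in it is an assumption made for illustration: the two hypothetical countries "A" and "B", their invented response slopes, the noise level, and the helper `ols_slope`. None of it comes from Curtin and Burgess. It shows a pooled regression returning a slope that describes neither country, and the opposite extreme, one slope per country-year, leaving no degrees of freedom at all.

```python
import numpy as np

def ols_slope(x, y):
    """Slope of a one-regressor OLS fit of y on x (with an intercept)."""
    x_centered = x - x.mean()
    return float(x_centered @ (y - y.mean()) / (x_centered @ x_centered))

rng = np.random.default_rng(0)
n_years = 50
temp = np.linspace(-1.0, 1.0, n_years)  # synthetic temperature anomalies

# Hypothetical "true" growth responses to temperature; invented numbers.
true_slope = {"A": -2.0, "B": 0.5}
growth = {
    country: beta * temp + rng.normal(0.0, 0.2, n_years)
    for country, beta in true_slope.items()
}

# Option 1: estimate each country separately (needs a stable slope over time).
per_country = {c: ols_slope(temp, growth[c]) for c in true_slope}

# Option 2: pool both countries and impose one common "damage function".
pooled = ols_slope(
    np.concatenate([temp, temp]),
    np.concatenate([growth["A"], growth["B"]]),
)

# Option 3: let every country-year have its own slope. The number of
# parameters then equals the number of observations, so nothing is left over.
n_obs = 2 * n_years
dof_fully_flexible = n_obs - n_obs

print("per-country slopes:", per_country)
print("pooled slope:", round(pooled, 2))
print("degrees of freedom with one slope per observation:", dof_fully_flexible)
```

In this toy setup the pooled slope lands near the midpoint of the two invented slopes, so it misstates the response of both countries, while the fully flexible alternative cannot be estimated at all.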

When a Few Data Points Drive the World

If the identification problem weren’t enough, the paper highlights another issue that should make anyone uneasy: extreme sensitivity to a handful of observations.

In one prominent study (Burke et al. 2015), removing just six data points out of more than 6,000 reduces the estimated climate effect by about 25 percent.

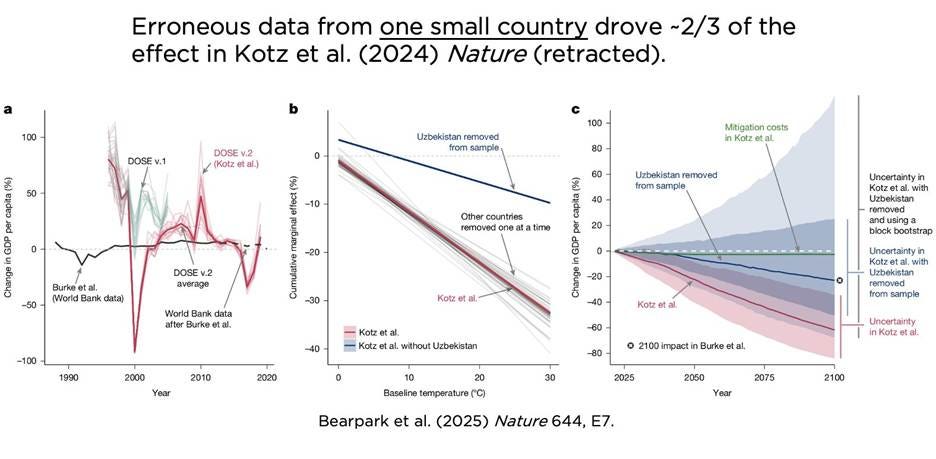

Even more striking is the example of a now-retracted Nature paper, where erroneous data from a single country, Uzbekistan, accounted for roughly two-thirds of the estimated global climate damage effect (see the 3 figures below). Mistakes do, of course, happen, but the deeper question is: how can one small country plausibly drive global conclusions in the first place?

The answer lies in how pooled regressions work. When countries are combined into a single statistical model, idiosyncratic events, like Rwanda’s genocide or Armenia’s post-Soviet collapse, can end up disproportionately influencing the estimated “global” relationship between climate and growth.

In effect, these models can imply that how a small, crisis-stricken country responds to a hot year tells us something meaningful about how the United States or Germany will respond to long-run climate change. That’s a leap few would defend if stated plainly.
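A small synthetic sketch in Python can illustrate how a pooled regression lets a few crisis observations dominate. This is not a replication of Burke et al. or of the retracted paper: the sample size, the weak "true" slope, the three hypothetical crisis country-years, and the helper `ols_slope` are all assumptions chosen for illustration.

```python
import numpy as np

def ols_slope(x, y):
    """Slope of a one-regressor OLS fit of y on x (with an intercept)."""
    x_centered = x - x.mean()
    return float(x_centered @ (y - y.mean()) / (x_centered @ x_centered))

rng = np.random.default_rng(1)

# 600 ordinary country-years: a weak invented temperature effect plus noise.
temp = np.linspace(-1.0, 1.0, 600)
growth = -0.1 * temp + rng.normal(0.0, 0.5, temp.size)

# 3 hypothetical crisis country-years: an unusually hot year that happens to
# coincide with an economic collapse unrelated to temperature.
crisis_temp = np.full(3, 2.0)
crisis_growth = np.full(3, -30.0)

all_temp = np.concatenate([temp, crisis_temp])
all_growth = np.concatenate([growth, crisis_growth])

full_slope = ols_slope(all_temp, all_growth)  # all 603 observations
trimmed_slope = ols_slope(temp, growth)       # crisis rows dropped

# Share of the pooled estimate that disappears when the 3 rows are removed.
share_from_crisis = 1.0 - trimmed_slope / full_slope

print(f"slope with all observations:  {full_slope:.2f}")
print(f"slope without 3 crisis rows:  {trimmed_slope:.2f}")
print(f"share driven by crisis rows:  {share_from_crisis:.0%}")
```

In this toy setup the three crisis rows, about 0.5 percent of the sample, account for most of the pooled slope. A leave-out check of this kind is one simple way to see whether a "global" estimate rests on a handful of idiosyncratic events.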

So What Should We Do?

It’s important to be clear about what Curtin and Burgess are not saying. They are not claiming that climate change is harmless or that reducing emissions lacks value. The existence of a negative carbon externality remains well-established.

What they are challenging is the confidence with which economists, and especially policymakers, treat specific numerical estimates of climate damage.

If the underlying relationship cannot be reliably identified, then there is no single “correct” social cost of carbon. The wide range of estimates is not a temporary inconvenience but reflects a deep uncertainty that cannot be eliminated with better data or more sophisticated models.

Rather than pretending that we can fine-tune climate policy based on precise damage estimates, we should acknowledge the limits of our knowledge. This pushes us toward a framework of decision-making under deep uncertainty, where robustness, resilience, and flexibility take precedence over point estimates and optimal control.

There’s a broader takeaway here, one that extends beyond climate economics.

Policymakers often demand precise numbers, whether it’s the fiscal multiplier, the elasticity of taxable income, or the social cost of carbon. Economists, in turn, are tempted to provide them, even when the underlying uncertainty is substantial.

Only last year the Congressional Budget Office (CBO) published a paper that does precisely this, concluding that climate change would cause GDP to be 4 percent lower in 2100 than if temperatures had stayed constant. The CBO takes these studies into account when producing its long-term forecasts.

But precision is not the same as accuracy, and when models are fundamentally fragile, presenting point estimates can be more misleading than helpful. The Curtin-Burgess paper is a reminder that some questions may not yield to empirical methods as cleanly as we would like. In those cases, humility is not a weakness, it’s a necessity.

Trillion-dollar decisions deserve more than false precision.

"Policymakers often demand precise numbers, whether it’s the fiscal multiplier, the elasticity of taxable income, or the social cost of carbon."

Not often enough. :) Neither the CO2 emissions mitigation incentives in the IRA nor the One Budget Bashing Bill revision of those incentives was guided by any estimate of the social cost of carbon.

There is a deeper problem than the one you are suggesting about estimating “the” proper social cost of carbon.

Well, two different, overlapping problems.

The first is that most public policy that seeks to make the economy better by addressing said “social cost of carbon” will in fact almost certainly be net negative.

Just think about it logically, without numbers. If “climate change” is only going to reduce world economic output by 4% - hell, even 8% - in 75 years, then the correct answer economically is to DO NOTHING except develop some adaptation technology.

So the first issue is that there is immense uncertainty about the economic effects of “climate change”, and in all but the most disastrous case (more in a moment), the prescribed cures surely are worse than the disease.

The fact is that only the so-called tail risk is the case where different public policy could with any meaningful plausibility be economically worthwhile.

I.e. if the policy has a meaningful chance of avoiding xx degrees (2, 3, 4 - you pick) of warming, which then accelerates.

Different people propose different estimates of this likelihood (some suggesting it is close to impossible). I claim no expertise whatsoever on this point.

Where I do claim the expertise of logic is that the public policy prescriptions of the left today - the ones they think the public might swallow - have a chance vanishingly close to zero of being the difference between avoiding that tipping point or not.

And so what the left is proposing is a “heads we (humanity) lose [because we waste enormous resources and reduce economic growth for no good reason], and tails humanity almost certainly also loses” - because the policies are almost guaranteed not to be sufficient to make a difference in the “existential risk” case to avoid said existential risk.

So the only winners are the leftists attaining and holding public power by pointing to this shibboleth, which, even in the very unlikely case that it does happen, they will not stop.